The Ethereum Roadmap for Dummies

Finally understand Ethereum's path to its final form

by Antonio Fernández from beToken Research

I recently read an article from Delphi Digital, The Hitchhiker's Guide to Ethereum, in which Jon Charbonneau summarises and explains in detail all the updates and improvements that will gradually take Ethereum towards its final form after the long-awaited Merge, its transition from PoW to PoS. It is well documented, compact and "ready to assimilate"; even Vitalik Buterin himself, the father of Ethereum, praised how accurate it was. Excited, I jumped right in and devoured it. Unfortunately, it took me more hours than I would like to admit, and my mind flashed back to my days as a physics student, huddled in the corner of my room as I tackled my first Quantum Field Theory problem.

But I made it out, and what I found is a beautiful, interconnected system that, albeit slowly (I hope I'm still alive when the upgrades are finished), may elegantly lead Ethereum to the ultimate decentralisation, security and scalability its developers desire. So in this article I set out to break down all these improvements and serve them to you on a platter, so that you can digest them and let them "click" in your head, as they did in mine, without suffering as much as I did. That way you can judge, with an informed opinion, whether it is really worth betting long term on an Ethereum that seeks to achieve its goals without taking shortcuts: with a firm step, slowly and deliberately, cooked over a low flame.

Welcome, Tokeners, to the Ethereum Roadmap for Dummies.

The road to a perfect post-Merge Ethereum

If you've been keeping an eye on the cryptoverse lately, or if you read our newsletter on a regular basis, you're probably aware that Ethereum's long-awaited Merge, its transition from PoW to PoS consensus mechanism, is getting "closer" (its delay is already a meme, but we're hopeful that we'll see it completed later this year).

However, if you read my article on the Merge (if not, I recommend it, as it will help you understand everything a little better), you will know that this update will not solve Ethereum's current problems, such as its prohibitive gas costs, its poor throughput of around 15 transactions per second, or the ever-increasing computational requirements to participate in its consensus.

What the Merge will do is take the first step towards a huge and complex series of interconnected updates, with bombastic names and enough technicalities to make even experts in the field blush.

Before delving into the nature of these upgrades, though, we need to define where they will take Ethereum.

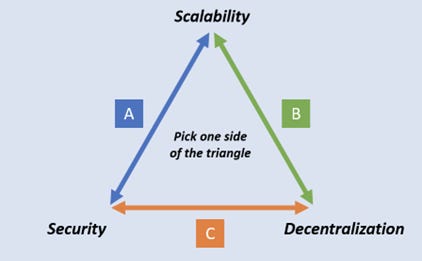

As I explained in the Merge article, an ultimate Layer 1 blockchain seeks to be three things at once: decentralised, secure and scalable. This is known as the Blockchain Trilemma, because to achieve two of the three characteristics, it is necessary to give up the third. The Ethereum PoW we know today is highly decentralised and secure. However, it is not scalable, as all its current problems demonstrate.

Therefore, the updates seek the "impossible": to improve Ethereum's scalability without compromising its decentralisation and security. Of course, once everything has been completed, the Ethereum blockchain will look substantially different from the one we know today...

An Ethereum covered by Rollups

Recently, Ethereum developers have had an epiphany: why should we focus on improving the speed of transaction computation and execution on Ethereum, when rollups are already doing it for us?

Arbitrum, Optimism, Polygon (not a rollup, but it works similarly), Starknet... Successful rollups built on Ethereum can no longer be counted on the fingers of one hand, and all of them promise huge transaction throughput and ridiculously low gas costs... Oh wait, you don't know what a rollup is?

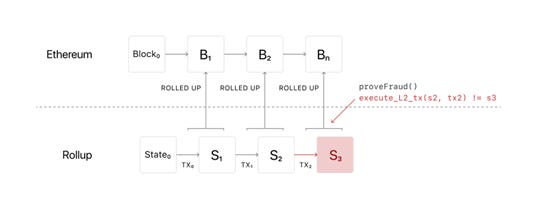

Simply put, you can think of them as independent blockchains that periodically publish their compressed transaction data (rolled up like a carpet, hence the name Rollups) to Ethereum, so that they are recorded there and therefore inherit its security, and in return, take computational load off it.

The data that rollups upload to Ethereum is vital to their operation, as it is used to verify that the transactions carried out on them are correct. In the case of Optimistic Rollups, anyone can prove that an incorrect or malicious transaction has been made by analysing this data and submitting a Fraud Proof. zk-Rollups, on the other hand, upload a validity proof along with the data, so that anyone can check that it is correct.

Today, many of Ethereum's crypto projects are built on its Layer 2 rather than on the blockchain itself. This is why Ethereum, in order to scale, will now seek to be as accommodating as possible to an ecosystem of rollups, allowing them to send huge amounts of data to the blockchain without compromising its integrity. Note that Ethereum was not originally built to go all-in on rollups, so there is a lot to optimise.

Now we can dive with conviction into the Roadmap updates themselves. Let's start with the heavy artillery: Ethereum will have 4 major changes, intertwined with each other, that will take it to the heart of the Blockchain Trilemma, and make it the ultimate Layer 1 of Rollups: Proposer-Builder Separation, Data Availability Sampling, Weak Statelessness and Danksharding.

Hold on tight, Tokeners.

Proposer-Builder Separation (PBS)

The problem: nodes do EVERYTHING.

The key to Ethereum remaining decentralised is for anyone with a modest laptop (and some ETH, of course) to be able to run a validating node on the blockchain and start participating in its consensus mechanism.

Currently this is not possible, because nodes have two jobs: building the next block (executing all its transactions, placing them in the correct order and updating the state of the blockchain) and participating in consensus.

In the case of PoW, nodes (miners) compete with each other to solve a computational puzzle: finding a hash of their candidate block, which references the previous block, that falls below a target value. Whoever succeeds appends their block to the chain with the most accumulated work (no need to go deeper into PoW for this article, don't worry).

In the case of PoS, post Merge, things change a bit. If you haven't read my article on the Merge, here is a summary:

The blockchain is divided into epochs of 32 slots each. In each slot one block enters, linked to the block in the previous slot. For each slot, a validator is selected pseudo-randomly from the validator pool as the proposer: it builds a block and proposes it, and a committee of validators checks that it is correct and signs (attests to) it. If enough signatures are gathered (⅔), the block is assigned to the slot. Each slot lasts 12 seconds. When two epochs are completed without problems, their blocks are considered finalised, which means transactions reach finality in approximately 12.8 minutes (2 epochs × 32 slots × 12 seconds).
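If you want to check where that 12.8-minute figure comes from, here is the arithmetic as a tiny Python sketch. The constant names are just illustrative labels; the values are the ones quoted above, and real-world finality can take longer if the network runs into trouble.

```python
# Back-of-the-envelope PoS timing, using the parameters quoted in the text:
# 32 slots per epoch, 12 seconds per slot, finality after 2 epochs.
SLOTS_PER_EPOCH = 32
SECONDS_PER_SLOT = 12
EPOCHS_TO_FINALITY = 2

def epoch_seconds(slots: int = SLOTS_PER_EPOCH, slot_s: int = SECONDS_PER_SLOT) -> int:
    """Length of one epoch in seconds."""
    return slots * slot_s

def finality_minutes(epochs: int = EPOCHS_TO_FINALITY) -> float:
    """Approximate time until a block is finalised, in minutes."""
    return epochs * epoch_seconds() / 60

print(epoch_seconds())     # 384 seconds, i.e. 6.4 minutes per epoch
print(finality_minutes())  # 12.8
```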

Building a block is computationally very demanding, so even if you're not always the proposer, you're going to need a lot of computational power for when it's your turn to be the proposer.

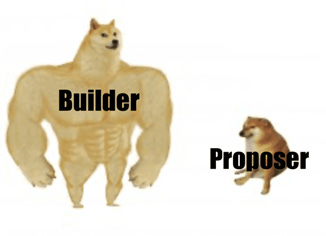

Solution: we need a builder

The solution might raise some eyebrows: a new role will be created in the blockchain, the builders. These will be nodes with enormous computational power, run by specialised agents, whose job is to build blocks and hand them to the proposer of each slot. Builders will compete to assemble the block with the highest MEV (Maximal Extractable Value, i.e. the extra value, beyond transaction fees, that can be obtained by including, omitting or reordering certain transactions in a block) and bid for it to be chosen; the proposer simply picks the highest bid and passes that block to the slot committee, which validates it and potentially adds it to the blockchain.
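To make the division of labour concrete, here is a toy model of the auction, assuming the usual description of PBS in which builders bid for their block to be chosen and the proposer takes the highest bid. `BuilderBid` and `select_bid` are hypothetical names, not part of any Ethereum client. The real design also needs the proposer to commit to a bid before seeing the block's contents (so it cannot steal the builder's MEV), which this sketch ignores.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BuilderBid:
    """Hypothetical bid: `builder` offers `payment_eth` to the proposer
    if the block `block_hash` is the one proposed for the slot."""
    builder: str
    block_hash: str
    payment_eth: float

def select_bid(bids: list[BuilderBid]) -> BuilderBid:
    """The proposer builds nothing itself: it simply takes the most lucrative bid."""
    if not bids:
        raise ValueError("no builder bids received for this slot")
    return max(bids, key=lambda bid: bid.payment_eth)

bids = [
    BuilderBid("builder_a", "0xaaa", 0.12),
    BuilderBid("builder_b", "0xbbb", 0.31),
    BuilderBid("builder_c", "0xccc", 0.05),
]
print(select_bid(bids).builder)  # builder_b
```

The proposer's job shrinks to a `max()` over bids, which is exactly why a modest laptop is enough for it.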

If you haven't raised an eyebrow yet, your nose may be twitching, because this smacks of centralisation: an ordinary person could not run a builder node. However, it is a necessary centralisation, as it completely removes the need for the nodes validating Ethereum to build blocks, allowing them to focus simply on checking that those blocks are correct.

This system works as long as at least one builder is honest, because the final word on which block enters the blockchain rests with the validators, who are more decentralised since they do not require demanding hardware. If they receive an incorrect block, they can reject it until they receive a valid one. And to prevent builders from censoring transactions, the proposer can force them to include the transactions it chooses, through what are known as censorship-resistance lists (crLists).

As icing on the cake, blocks can even be made larger, since the computational burden falls on the builders, who are more than capable. It is a simple and elegant system.
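A minimal sketch of how a censorship-resistant list could constrain builders, under one simplifying assumption (ours, not a finalised spec): a block is only acceptable if it contains every transaction on the proposer's list, unless the block is already full. `respects_crlist` is a hypothetical helper, and "full" is reduced to a simple gas comparison.

```python
def respects_crlist(block_txs: set[str], crlist: set[str],
                    gas_used: int, gas_limit: int) -> bool:
    """Hypothetical validity rule: every transaction on the proposer's list
    must be in the block, unless the block is already full."""
    missing = crlist - block_txs
    block_is_full = gas_used >= gas_limit
    return not missing or block_is_full

crlist = {"tx_censored_by_builder"}
print(respects_crlist({"tx1", "tx_censored_by_builder"}, crlist, 10_000_000, 30_000_000))  # True
print(respects_crlist({"tx1"}, crlist, 10_000_000, 30_000_000))  # False: builder censored it
print(respects_crlist({"tx1"}, crlist, 30_000_000, 30_000_000))  # True: block genuinely full
```

The "block is full" escape hatch exists so that a builder is not punished for omitting a listed transaction it had no room for.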

Data Availability Sampling

It's great that ETH stakers can validate the network without worrying about MEV, or the heavy task of executing all the transactions on the blockchain and building a block. However, even with that there is still a serious problem: in order to check that a block is valid, validators have to download it completely. Otherwise, they could skip a transaction where, for example, your entire ETH balance is transferred to so-and-so_99's account, and that, I guess, wouldn't make you happy... With the increase in block size, and with all the huge amount of data that rollups send to the blockchain, this is certainly another serious impediment for normal people to participate in the Ethereum consensus.

This is where Data Availability Sampling comes in. In a nutshell: a set of techniques that allows validators to confirm, with near 100% certainty, that all the data in a block is available, just by checking a small portion of it. As Jack the Ripper would say, "let's go in parts"...

Data Availability is the key

The key to understanding all this is that rollups don't need Ethereum validators to check all the transactions and data they upload. It is enough for them to ensure that this data is available (hence Data Availability) for anyone to download. In the case of an Optimistic Rollup, for example, anyone can prove that a transaction is incorrect by issuing a Fraud Proof based on this data. Now, unfortunately, we have to study a bit of mathematics.

How does Data Availability Sampling work?

Okay, if you don't feel like reliving the trauma of mathematics, you can skip this section and simply assume that Data Availability Sampling works thanks to "polynomial magic", which allows a validator to be confident that an entire block is available by checking only a small part of it.

However, if you're interested in the details, here's how it works:

Ethereum builders will split the block into "data packets" that are related to each other. Let's imagine that these data packets are coordinates on a two-dimensional graph (x and y). Suppose a validator takes 4 of them: $(x_0, y_0)$, $(x_1, y_1)$, $(x_2, y_2)$ and $(x_3, y_3)$.

I now introduce a polynomial of degree 3, $y(x) = a_3 x^3 + a_2 x^2 + a_1 x + a_0$, constructed from the above coordinates and defined for any value of x.

Its coefficients $a_0, a_1, a_2, a_3$ are found by solving the system of four equations $y(x_i) = y_i$ for $i = 0, \dots, 3$. Once they are known, the polynomial can be evaluated at any point. The simplest example: $y(0) = a_0$.

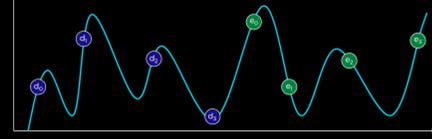

Once we have the polynomial, we can extend the data with 4 new evaluations of it, that is, points $(x_4, y_4)$, $(x_5, y_5)$, $(x_6, y_6)$ and $(x_7, y_7)$ that satisfy the same equation elsewhere on the graph. The figure gives a visual idea of this.

These new points are extra, redundant data for the block, derived from the original data. Since any 4 of the 8 points are enough to recover the degree-3 polynomial, the whole block can be rebuilt from any half of the extended data. A builder would therefore have to hide more than 50% of the extended block to fool the validators.
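Here is a toy version of that "extend, then rebuild from any half" trick in Python. It is a sketch under assumptions of our own: four made-up data values, evaluation points x = 0..7, and arithmetic modulo the small prime 65537 (real-number arithmetic would suffer rounding errors, so, like the real protocol, we work in a finite field, though Ethereum's field is far larger). It uses plain Lagrange interpolation and leaves out the KZG commitments entirely.

```python
P = 65537  # a prime; all arithmetic is done modulo P

def lagrange_eval(points: list[tuple[int, int]], x: int) -> int:
    """Evaluate at x the unique polynomial of degree < len(points)
    passing through `points`, using Lagrange interpolation mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, P - 2, P) is the modular inverse of den (Fermat's little theorem)
        total = (total + yi * num * pow(den, P - 2, P)) % P
    return total

def extend(data: list[int]) -> list[int]:
    """Treat data[i] as y(i) for i = 0..n-1 and return y(0), ..., y(2n-1)."""
    original = list(enumerate(data))
    return [lagrange_eval(original, x) for x in range(2 * len(data))]

def reconstruct(known: dict[int, int], n: int) -> list[int]:
    """Recover the original n values from ANY n known evaluations."""
    points = list(known.items())[:n]
    return [lagrange_eval(points, x) for x in range(n)]

data = [17, 42, 7, 99]          # four original "data packets"
extended = extend(data)         # eight evaluations of the same degree-3 polynomial
assert extended[:4] == data     # the first half is the original data itself
# Keep only half of the extended data (points 1, 4, 6 and 7) and rebuild the rest.
survivors = {x: extended[x] for x in (1, 4, 6, 7)}
print(reconstruct(survivors, 4))  # [17, 42, 7, 99]
```

Which half survives doesn't matter: any 4 distinct points pin down the same polynomial, which is what forces a cheating builder to hide more than half.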

Data Availability Sampling consists of repeating this check many times on randomly chosen chunks, until it is almost 100% certain that all the data in the block is available. If the builder were hiding enough data to prevent reconstruction, each random sample would have at least a 50% chance of hitting a missing chunk. So if 30 samples all succeed, the probability that the data is not fully available is at most $2^{-30}$, roughly one in a billion.

To conclude this section, there is much more to Data Availability Sampling than I have told you: notably KZG commitments, more "polynomial magic" that allows you to prove that the extended data is consistent with the original, and not data placed at random to mislead you. In the two-dimensional scheme (rows and columns) planned for Ethereum, this ends up implying that 75% of the extended block, rather than 50%, must be available for it to be reconstructed and for the validators not to be fooled.
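The probability argument fits in a few lines of Python. This is a simplified model (our assumption): samples are independent and uniformly random, and a cheating builder publishes just under the reconstruction threshold, so each sample succeeds with probability at most `available_fraction`. The function names are hypothetical, and the Monte Carlo part only serves as a sanity check on the formula.

```python
import random

def undetected_probability(available_fraction: float, samples: int) -> float:
    """Probability that `samples` independent uniform random samples all hit
    published chunks when only `available_fraction` of the data is published."""
    return available_fraction ** samples

def samples_needed(available_fraction: float, target: float) -> int:
    """Smallest number of samples that pushes the undetected probability to
    `target` or below (a simple loop, which avoids log rounding surprises)."""
    if not 0 <= available_fraction < 1:
        raise ValueError("available_fraction must be in [0, 1)")
    n, p = 0, 1.0
    while p > target:
        p *= available_fraction
        n += 1
    return n

def simulate(available_fraction: float, samples: int,
             trials: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of how often a withholding builder goes unnoticed."""
    rng = random.Random(seed)
    fooled = sum(
        all(rng.random() < available_fraction for _ in range(samples))
        for _ in range(trials)
    )
    return fooled / trials

print(undetected_probability(0.5, 30))  # 9.313225746154785e-10, i.e. 2**-30
print(samples_needed(0.5, 2**-30))      # 30
print(samples_needed(0.75, 2**-30))     # 73
print(simulate(0.5, 5))                 # close to 1/32 = 0.03125
```

The last two lines show the cost of a 75% threshold: with each sample less informative, reaching the same one-in-a-billion confidence takes more samples under this simple model.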

Weak Statelessness

Our validator nodes are now much more relaxed: they have handed the job of building blocks over to the builders, and they have studied advanced mathematics to make validation easier rather than doing it the hard way. However, they find that they have a serious case of Diogenes syndrome...

Current nodes store absolutely EVERYTHING. Each node keeps every transaction and the entire history of the blockchain since its genesis block, i.e. terabytes of data growing at an ever-increasing rate. At the same time, they store the entire state of the blockchain, i.e. account balances and all the internal variables of Smart Contracts, which are updated when transactions are sent to them.

Clearly, something has to be done... If I were an Ethereum validator, I would like to have some extra storage space on my humble laptop, if only to play Minesweeper. The solution is clear... It's time to cut back!

We don't need ALL the state

Thanks to Proposer-Builder Separation, validators will no longer need to store the entire state of the blockchain, since it is really only needed to build blocks, and that job is left to the builders. Instead, to validate transactions, validators will use what are known as witnesses.

Witnesses are proofs that are uploaded by the builders along with the block, and they contain the minimum necessary information about the state for the validators to check that the transactions in the block are correct.
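To get a feel for what a witness is, here is a sketch using a plain binary Merkle tree with SHA-256. This is a deliberate stand-in: Ethereum's state actually lives in a Merkle Patricia trie today, and the Verkle trees this section mentions aim to make such witnesses much smaller. The helper names and the toy "account:balance" leaves are hypothetical. The key idea survives the simplification: the validator keeps only the root, and the builder ships the few sibling hashes needed to check one piece of state against it.

```python
import hashlib

def h(data: bytes) -> bytes:
    """SHA-256, standing in for whatever hash or commitment the real tree uses."""
    return hashlib.sha256(data).digest()

def _next_level(level: list[bytes]) -> list[bytes]:
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]

def merkle_root(leaves: list[bytes]) -> bytes:
    """Root of a binary Merkle tree (number of leaves must be a power of two)."""
    if not leaves or (len(leaves) & (len(leaves) - 1)) != 0:
        raise ValueError("this sketch only supports a power-of-two number of leaves")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        level = _next_level(level)
    return level[0]

def merkle_proof(leaves: list[bytes], index: int) -> list[bytes]:
    """Builder side (holds all the state): sibling hashes from leaf to root."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        proof.append(level[index ^ 1])  # the sibling of the current node
        level = _next_level(level)
        index //= 2
    return proof

def verify_witness(root: bytes, leaf: bytes, index: int, proof: list[bytes]) -> bool:
    """Validator side: stores only `root`, yet can check one piece of state."""
    node = h(leaf)
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root

state = [b"alice:10", b"bob:5", b"carol:7", b"dave:0"]
root = merkle_root(state)                    # all the validator keeps
witness = merkle_proof(state, 1)             # shipped by the builder with the block
print(verify_witness(root, b"bob:5", 1, witness))    # True
print(verify_witness(root, b"bob:500", 1, witness))  # False: forged balance
```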

And this is basically known as Weak statelessness: validators do not need all the state of the blockchain, but only a small part of it.

This update would have wonderful implications, such as allowing the gas limit to be tripled. Unfortunately, it is not so easy to implement and requires advanced cryptography, which for sanity's sake I will not explain here: specifically, Verkle trees.

State with an expiry date

Imagine that a Smart Contract becomes obsolete and its users migrate to a different, better one. The entire state of that Smart Contract also becomes obsolete, and yet it is still stored by every node on the blockchain, even though it will never be used again. Therefore, in the future, nodes will be allowed to permanently delete state that has been inactive for more than a year. If you ever want to recover it, you will have to go to a service that stores the history of the blockchain and publish on-chain a proof of that state in order to reactivate it. Which brings me to the next point:
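A minimal sketch of inactivity-based state expiry, under our own simplifying assumptions: every read or write counts as activity, anything untouched for more than a year is dropped, and reviving expired state requires a proof whose verification is out of scope here, so the caller just passes in the result. `ExpiringState` and its methods are hypothetical and do not mirror any concrete Ethereum proposal.

```python
from dataclasses import dataclass, field

ONE_YEAR = 365 * 24 * 3600  # expiry period from the text, in seconds

@dataclass
class ExpiringState:
    """Hypothetical node-side state store with inactivity-based expiry."""
    values: dict = field(default_factory=dict)
    last_touched: dict = field(default_factory=dict)

    def write(self, key: str, value: int, now: int) -> None:
        self.values[key] = value
        self.last_touched[key] = now

    def read(self, key: str, now: int) -> int:
        if key not in self.values:
            raise KeyError(f"{key} expired or never existed: a revival proof is needed")
        self.last_touched[key] = now  # being used keeps the state alive
        return self.values[key]

    def expire(self, now: int) -> list[str]:
        """Drop every entry untouched for more than ONE_YEAR; return the dropped keys."""
        stale = [k for k, t in self.last_touched.items() if now - t > ONE_YEAR]
        for k in stale:
            del self.values[k]
            del self.last_touched[k]
        return stale

    def revive(self, key: str, value: int, proof_is_valid: bool, now: int) -> None:
        """Re-activate expired state, given an (externally verified) proof."""
        if not proof_is_valid:
            raise ValueError("invalid proof: state not revived")
        self.write(key, value, now)

state = ExpiringState()
state.write("old_dex.pool_balance", 123, now=0)
state.write("new_dex.pool_balance", 456, now=0)
state.read("new_dex.pool_balance", now=ONE_YEAR)  # users moved to the new contract
print(state.expire(now=ONE_YEAR + 1))             # ['old_dex.pool_balance']
state.revive("old_dex.pool_balance", 123, proof_is_valid=True, now=ONE_YEAR + 2)
print(state.read("old_dex.pool_balance", now=ONE_YEAR + 2))  # 123
```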

History with an expiry date

Even if you delete old state, you still keep every block stored since genesis. To solve this, we again enter tricky territory: "you have to trust a third party"... The solution will be for nodes to delete all historical data that is more than a year old. If we want to access it, we will have to obtain it from a third party that does keep everything stored. As long as one of these entities is honest, we will be able to retrieve the data. A necessary sacrifice to achieve the lightness a decentralised network of nodes needs.

From eternal "calldata" to fleeting "blobs"

But there is still one important element left to lighten the load on the nodes, and it has to do directly with the data that rollups periodically upload to Ethereum. Currently, they do so as calldata, which is basically the cheapest way to put data on Ethereum at the moment and has nothing to do with the state of the blockchain itself. Even so, nodes keep accumulating more and more of this data, causing a serious scalability problem in the long run.

This will therefore be handled in a similar way to the history of the blockchain, but more radically. Keep in mind that we only need rollup data to be "available" long enough for anyone to download it and check its validity. Ethereum will therefore be updated to allow rollups to upload their data in a new format: blobs. These are data structures that nodes will delete about a month after they are published.
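Both history expiry and blob expiry boil down to a retention window that a node applies to what it stores. Here is a minimal Python sketch of such a pruning rule. The windows (about a year for old blocks, about a month for blobs) are taken from the text and should be treated as approximate; the exact values are protocol parameters. The `prune` helper, item names and timestamps are made up for illustration.

```python
from datetime import datetime, timedelta, timezone

# Retention windows as described in the text (approximate, assumed values).
HISTORY_RETENTION = timedelta(days=365)  # old blocks: about one year
BLOB_RETENTION = timedelta(days=30)      # rollup blobs: about one month

def prune(items: list[tuple[str, datetime]], retention: timedelta,
          now: datetime) -> list[tuple[str, datetime]]:
    """Keep only the (name, published_at) items younger than `retention`."""
    return [(name, ts) for name, ts in items if now - ts <= retention]

now = datetime(2023, 1, 1, tzinfo=timezone.utc)  # arbitrary example date
blobs = [
    ("blob_from_rollup_a", now - timedelta(days=3)),
    ("blob_from_rollup_b", now - timedelta(days=45)),
]
blocks = [
    ("block_recent", now - timedelta(days=200)),
    ("block_ancient", now - timedelta(days=800)),
]
print([name for name, _ in prune(blobs, BLOB_RETENTION, now)])      # ['blob_from_rollup_a']
print([name for name, _ in prune(blocks, HISTORY_RETENTION, now)])  # ['block_recent']
```

The same function serves both cases; only the window changes, which reflects how blobs are treated "like history, but more radically".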

Danksharding

We can now finally fit all the pieces of the puzzle together and discover the end goal of Ethereum's roadmap: Danksharding, a combination of all these updates that will replace the originally intended sharding model. Let's compare the two versions.

The original Sharding

In the sharding design originally planned for after the Merge, Ethereum would be composed of 64 shards and the Beacon Chain.

- The Shards would be a kind of individual blockchain connected to the Beacon Chain, on which all the data from the rollups would simply be published: Data Availability blockchains. They would be validated by committees drawn from Ethereum's general validator pool, rotated pseudo-randomly to avoid possible centralisation effects.

- The Beacon Chain would be the fusion of the current Ethereum with PoS consensus. It would be responsible for coordinating the validator pool and the 64 shards. The blocks of the shards would appear in a coordinated way in the slots of the Beacon Chain.

There are many problems with this model, including the fact that for all shards to be properly coordinated, there has to be exceptional synchronisation between all Ethereum nodes, which is perhaps too optimistic. Also, randomly assigning committees to shards is not as trivial as it seems...

Danksharding

This is why a much simpler and more straightforward model has emerged, a product of all the improvements I have been writing about in this article: Danksharding. It consists of the following:

To begin with, let's forget about shards. In each slot of the Beacon Chain, a huge block will be placed, built by the builders thanks to the Proposer-Builder Separation. This block will be analysed, by means of Data Availability Sampling, by the committee of validators assigned to the corresponding slot. And that's it. It's as simple as that.

This way, we can distribute all the validators evenly across an entire epoch of the Beacon Chain without synchronisation problems, since they no longer need to coordinate with any shard.

You are probably wondering: why does the term "sharding" appear in Danksharding at all? Well, because it sounds "nice". And because there is still a kind of fragmentation: each validator can check the validity of the mega-block assigned to its slot by downloading only a small part of it (a fragment). In fact, it would be just two rows and two columns of a block of 512 rows and 512 columns. Impressive, isn't it?
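How small is that fragment? Here is a quick Python calculation, assuming (as the text says) that each validator downloads two full rows and two full columns of a 512 × 512 grid, and treating every cell as the same size. The helper name is hypothetical, and the model ignores proofs and other overhead.

```python
def sampled_fraction(rows_total: int = 512, cols_total: int = 512,
                     rows: int = 2, cols: int = 2) -> tuple[int, float]:
    """Cells covered by `rows` full rows plus `cols` full columns of a
    rows_total x cols_total grid (crossing cells counted once), and their share."""
    cells = rows * cols_total + cols * rows_total - rows * cols
    return cells, cells / (rows_total * cols_total)

cells, frac = sampled_fraction()
print(cells)          # 2044 of 262144 cells
print(f"{frac:.2%}")  # 0.78%
```

Under this simple model, each validator touches less than 1% of the mega-block.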

Conclusion: will this be enough?

An ideal world

And that concludes our look at the expected future of Ethereum. Once Danksharding arrives (if it arrives), we would see blocks with immense space, and any of us with enough ETH and a modest computer could participate in Ethereum's consensus mechanism to validate them. Rollups would face almost no obstacles in uploading their brutal amount of data to the blockchain, which would translate into higher TPS (Transactions Per Second) and lower gas costs for them, making our lives a whole lot easier. But of course, this is the ideal scenario...

KISS (Keep It Simple, Stupid)

A well-regarded principle in the scientific world is Ockham's Razor: "the simplest explanation is usually the most probable". If we turn the phrase around a couple of times, we can apply it to Ethereum's roadmap: many researchers and programmers working tirelessly on its updates, an immense amount of technology to cover all its flaws, huge implementation delays (see the Merge) and many promises that we do not know will be fulfilled. It is said that the path to decentralisation has no shortcuts, but it is also possible that this is getting out of hand. Meanwhile, there is plenty of time and room for alternative Layer 1s, with novel designs that solve Ethereum's problems by default, to try to dethrone the Queen of DeFi.

Rollup is centralised, who will decentralise it?

It's all very well that the Ethereum of the future will be made up of a huge decentralised network of validators. However, there's a problem that many people are overlooking today...

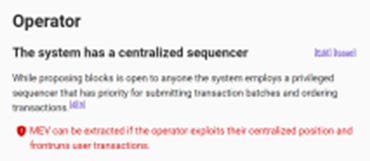

I propose a simple exercise: go to L2Beat, the best-known website tracking all Ethereum Layer 2s, which currently hold 4 billion dollars of TVL. Click on the first rollup you find (most likely Arbitrum, maybe Optimism). Oh, wow:

The sequencer that appears in all these rollups is nothing less than the single node that controls its blockchain. It orders transactions as it pleases and publishes them on Ethereum. ONLY ONE. In other words, if it doesn't like your transaction, it can simply skip it. It is true that in some of these rollups you could force its inclusion yourself via Ethereum (note that Starknet, for example, does not give this option), although it will probably cost you a hefty fee, courtesy of mainnet, which is hardly the ideal use case for a Layer 2. It is also true that you can check that transactions are valid with Fraud Proofs and zk-Proofs (note that in Optimism you still can't, and in Arbitrum only whitelisted members can do it), but for now there is not much you can do against censorship...

So I wonder... what's the point of Ethereum being decentralised when the information it receives is already centralised by default? The temporary answer is that all these rollups are in the process of finding a way to decentralise their sequencer, and to improve their Fraud Proofs system. But again, we are back in the territory of promises. Rollup technology is still in the process of settling in...

The Rollup War

The final aspect to consider is that, as you will have seen on L2Beat, there are many rollups and Layer 2s. What does the future hold for Ethereum? Will several rollups live in harmony, or will there be just one? Right now they are basically islands: they have fragmented Ethereum's liquidity, and moving tokens or ETH between them is neither easy nor entirely safe (just look at our newsletter: bridges carry risks). Which rollup to trust is certainly a serious question, and which of the two futures should we bet on? Should we just go for Polygon? Only time will tell.

Until then, keep reading us at beToken My Friend to find out how this soap opera unfolds, and much more. And if you liked this article or found it useful, don't hesitate to share it!


We love to collect all opinions and points of view. Do you want to collaborate with us?

Disclosure:

Authors may own funds and assets mentioned in this newsletter.

beToken my Friend is intended for informational purposes only. It is not intended to serve as investment advice. Consult with your investment, tax or legal advisor before making any investment decision.

Advertising and sponsorship do not influence editorial decisions or content. Third-party advertisements and links to other sites where products or services are advertised are not endorsements or recommendations by beToken.

beToken is not responsible for the content of the advertisements, the promises made or the quality or reliability of the products or services offered in any third party advertisement or content.

beToken Capital Research Lab is the promoter of beToken my Friend and part of the Genesis team of Tokeners DAO.

beToken Capital is made up of a team of experts in venture capital, financial markets and cryptocurrencies with the aim of contributing to the democratization of finance by promoting people's financial education.

It provides Institution-grade Research services in Crypto (some of them we share on beToken my Friend) and also invests early in the companies and protocols that are going to revolutionize Finance and Web 3.0.